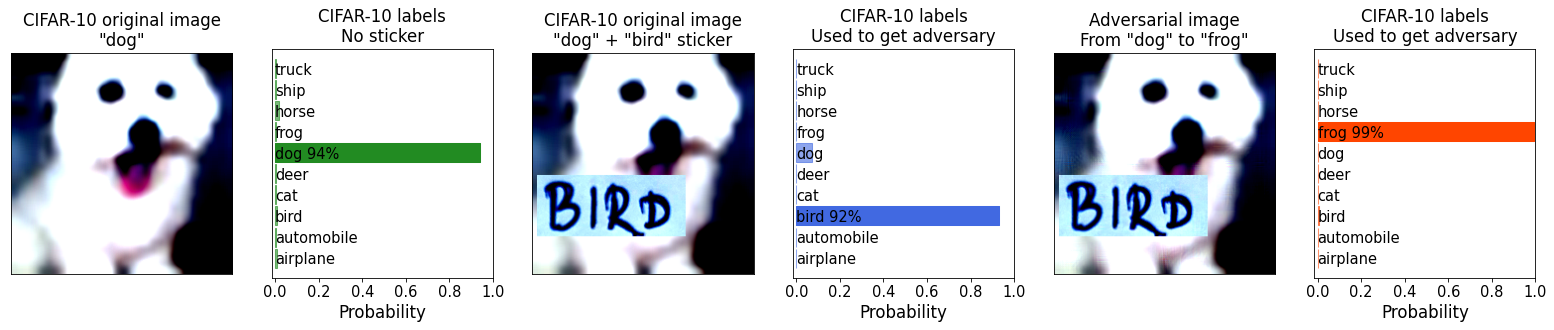

Pixels still beat text: Attacking the OpenAI CLIP model with text patches and adversarial pixel perturbations | Stanislav Fort

Explaining the code of the popular text-to-image algorithm (VQGAN+CLIP in PyTorch) | by Alexa Steinbrück | Medium

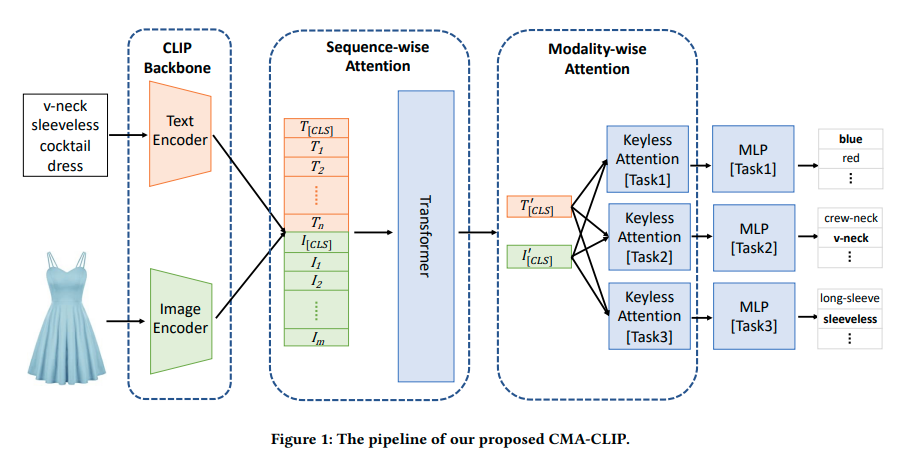

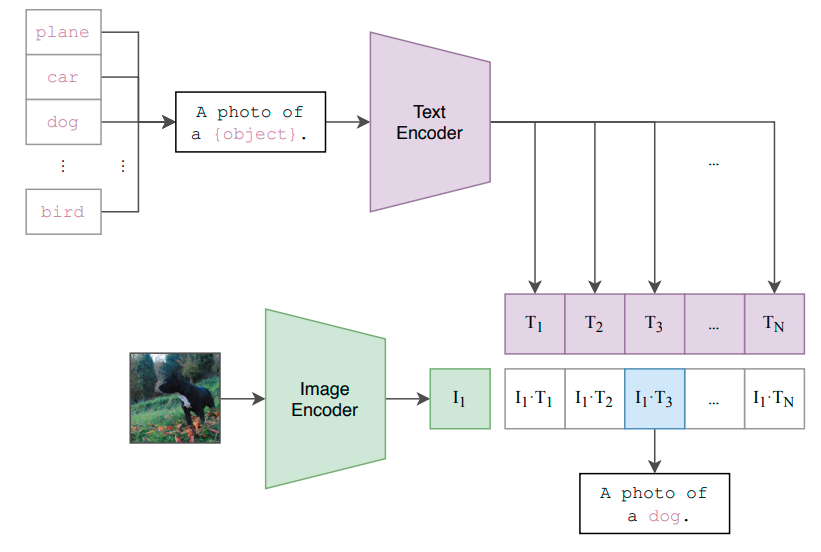

Process diagram of the CLIP model for our task. This figure is created...